Applies to Platform: UTM 3.0, 5.0 and later

Last Updated: 13th October 2017

This lesson will illustrate the necessary steps to configure a very simple transparent web proxy on a typical Endian appliance. A transparent web proxy requires no client-side changes to operate effectively, since all traffic is transparently redirected. The primary purpose of the web proxy is to (1) allow for a simple method to filter web traffic to appropriate levels for business and (2) provide accountability for user web traffic.

In the proposed scenario, an HTTP proxy is set up on the GREEN zone to block all websites that match either the enabled web filter categories or a custom blacklist.

Enable the HTTP Proxy

The first step is to enable the web proxy by clicking the grey button, which turns green when enabled. Once this is done, you can select the networks to be filtered transparently: in this example we activate the proxy only on the GREEN network; the setup for the other zones is analogous. You can also configure a few options, including the language of the error messages shown to users and the size, in KBytes, of the files allowed to pass through the proxy.

Configure the Log Settings

To configure the log settings, open the panel by clicking on Log settings, then choose what to log. Each option increases the size of the log files, so the more you decide to log, the more disk space you will need. In particular, the two options Useragent logging and Firewall logging will noticeably increase the size of the log files. As a rule of thumb, for normal usage, enabling only HTTP Proxy logging is a good choice; the additional options can be activated when debugging. Once done, click on Save and then on Apply to proceed.

Configure a Web Filter Profile

A web filter profile lets you define lists of remote resources to be used in Access Policies (see the next section). On the one hand, you can define a list of domains or web pages that must always be blocked or bypassed; on the other, you can enable one or more categories of the URL filter to block pages based on their content.

In order to configure web filtering using URL Blacklist, first ensure the HTTP antivirus is enabled by checking the appropriate box. Then, you can select the whole category to block by clicking the green arrow or, alternatively, you can drop down the subcategories and select those individually in order to block some and not others.

Safesearch Enforcement relies on a server-side function of most search engines and acts similarly to URL blacklist filtering. Search results may otherwise include content that the URL filter would normally block; when Safesearch is enabled on Endian, such results are not returned. However, since search engines have switched to the HTTPS protocol, this enforcement is effective only if the HTTPS Proxy is also enabled in Decrypt and scan mode.

Note

Click Update Profile and then Apply the changes to proceed.

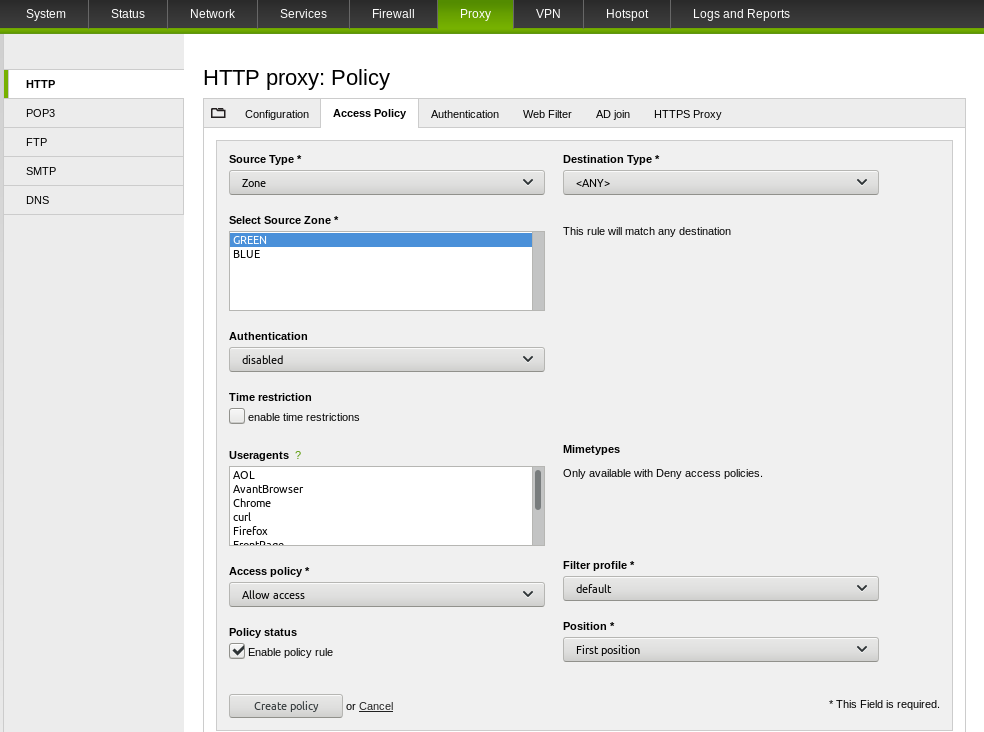

Configure the Access Policy

To also block SSL traffic, you should first configure the HTTPS proxy. You can find more details about this in the following article. If the HTTPS proxy is not enabled, all HTTPS websites will be allowed.

We are creating a simple policy for the Green zone (entire network) that will use the WEB filter profile (default) that we just configured in the previous step.

Click Create Policy and then Apply the changes to finalize the configuration.

Test the Web Proxy

You can now test your configuration by browsing the Internet from the Green network. You should see a block page for every site that matches the filter, while allowed sites should work normally.

Verify Logging

You should also be able to view all the web traffic in real-time by going to Logs and Reports > Live Logs > HTTP proxy.

Troubleshooting

There are a number of reasons why the proxy may not work as expected, or may seem not to filter the traffic as expected. Here are a few suggestions about what to check and how to solve the problem.

Verify that squid is running

To verify that the HTTP Proxy is running and listening on the correct ports (default: 8080), execute the following commands. Their output must be similar to the one shown:

root@endian:~ # netstat -plnt|grep squid

tcp 0 0 192.168.100.201:8080 0.0.0.0:* LISTEN 5578/(squid-coord-3

tcp 0 0 172.16.20.1:8080 0.0.0.0:* LISTEN 5578/(squid-coord-3

tcp 0 0 192.168.100.201:18080 0.0.0.0:* LISTEN 5578/(squid-coord-3

tcp 0 0 172.16.20.1:18080 0.0.0.0:* LISTEN 5578/(squid-coord-3

If you changed the port from its standard 8080 value, make sure it appears in the results.
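If you prefer not to scan the output by eye, the check can be scripted. The following is a minimal sketch, not part of the Endian tooling: the helper name squid_listens_on is hypothetical, and it assumes the netstat output has the column layout shown above.

```shell
# Hypothetical helper: reads `netstat -plnt` output on stdin and succeeds
# if a squid listener is bound to the TCP port given as first argument.
squid_listens_on() {
    port="$1"
    awk -v port="$port" '
        /LISTEN/ && /squid/ {
            # $4 is the local address, e.g. 172.16.20.1:8080
            n = split($4, parts, ":")
            if (parts[n] == port) found = 1
        }
        END { exit !found }
    '
}

# Usage on the appliance (8080 is the default port mentioned above):
#   netstat -plnt | squid_listens_on 8080 && echo "squid is listening on 8080"
```

The helper compares only the port part of the local address, so port 18080 is not mistaken for 8080.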

root@endian:~ # ps www -C squid

PID TTY STAT TIME COMMAND

5573 ? Ss 0:00 squid -f /etc/squid/squid.conf

5578 ? S 0:01 (squid-coord-3) -f /etc/squid/squid.conf

5579 ? S 0:00 (squid-disk-2) -f /etc/squid/squid.conf

5580 ? S 0:01 (squid-1) -f /etc/squid/squid.conf

If the output of ps is empty, then squid is not running. If no error message has been displayed on the GUI, you should check the log file.

Check the log files

If squid is not running, the log file /var/log/squid/squid.out will contain the reason why the proxy did not start. At the bottom of the log file, which you can read using the less command, you will find something like:

2017/09/26 14:28:17| ERROR: 'windowsupdate.microsoft.com' is a subdomain of '.microsoft.com'

2017/09/26 14:28:17| ERROR: You need to remove 'windowsupdate.microsoft.com' from the ACL named 'to_rule0'

FATAL: Bungled /etc/squid/squid.conf line 123: acl to_rule0 dstdomain "/etc/squid/acls/dst_rule0.acl"

Squid Cache (Version 3.4.14): Terminated abnormally.

The last line ("Terminated abnormally") shows that squid is not running, and in fact it did not start at all, while the lines above it show the reason why it could not start. The error in this case is the presence of a domain and one of its subdomains in the same access policy rule: one of them must be removed.
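A conflict of this kind can be looked for before squid refuses to start. The sketch below is a simplified illustration, not squid's own check: the function name find_subdomain_overlaps is hypothetical, it assumes the ACL file holds one domain per line, and it only treats entries with a leading dot (such as .microsoft.com) as covering their subdomains.

```shell
# Hypothetical helper: reads a list of domains on stdin (one per line,
# an assumption about the ACL file format) and reports every entry that
# is already covered by a leading-dot entry in the same list.
find_subdomain_overlaps() {
    awk '
        NF && $1 !~ /^#/ { d[++n] = $1 }          # skip blank and comment lines
        END {
            for (i = 1; i <= n; i++) {
                if (substr(d[i], 1, 1) != ".") continue   # only dotted entries act as parents
                plen = length(d[i])
                for (j = 1; j <= n; j++) {
                    clen = length(d[j])
                    # a child is strictly longer and ends with the parent string
                    if (clen > plen && substr(d[j], clen - plen + 1) == d[i])
                        print d[j] " is a subdomain of " d[i]
                }
            }
        }
    '
}

# Usage, with the file named in the example log above:
#   find_subdomain_overlaps < /etc/squid/acls/dst_rule0.acl
```

An empty output means the sketch found no overlap; squid itself remains the authority on whether the configuration is valid.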

Whenever you find any FATAL: error message, it will give you an indication of what's going wrong.
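Instead of paging through the whole file, you can filter the log for the relevant lines. This is a small sketch under the assumption that problems are marked with ERROR: or FATAL: as in the excerpt above; the function name squid_startup_errors is hypothetical.

```shell
# Hypothetical helper: reads a squid log on stdin and prints only the
# lines that carry an ERROR: or FATAL: marker.
squid_startup_errors() {
    grep -E 'ERROR:|FATAL:'
}

# Usage on the appliance, looking at the end of the log mentioned above:
#   tail -n 50 /var/log/squid/squid.out | squid_startup_errors
```

Restricting the search to the last lines keeps messages from older startup attempts out of the result.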

Use squid's debugging option

You can check the current squid configuration using its integrated debug option:

root@endian:~ # squid -f /etc/squid/squid.conf -k parse

This command makes squid read and parse its configuration as it would at startup. If something is wrong, it will end with messages similar to those found in the /var/log/squid/squid.out log file and point you to the configuration error. If no error is reported, the configuration is valid and the HTTP proxy should be able to start.

Check the order of the Access Policies

The rules defined under the Access Policies tab are checked from top to bottom, in the order in which they are displayed in the GUI. As soon as one match is found, the proxy exits the chain and further policies are not taken into account. If a policy seems to be ignored, check whether an earlier policy already matches the same traffic and, if so, change the order of the rules.
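The effect of first-match evaluation can be illustrated with a short sketch. It is a toy model, not how the appliance stores or evaluates its policies: the function name first_match, the "pattern action" line format, and the example hosts are all assumptions made for the illustration.

```shell
# Toy model of first-match evaluation. Policies are read from stdin as
# "<glob pattern> <action>" lines, in top-to-bottom order (a hypothetical
# format). The action of the first matching line wins.
first_match() {
    host="$1"
    while read -r pattern action; do
        case "$host" in
            $pattern) echo "$action"; return 0 ;;   # stop at the first match
        esac
    done
    echo "no-match"
}

# Usage: the same two policies give different results depending on order.
#   printf '%s\n' '*.example.com allow' '* deny' | first_match www.example.com
#   printf '%s\n' '* deny' '*.example.com allow' | first_match www.example.com
```

In the second ordering the catch-all policy matches first, so the more specific policy below it is never reached.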
